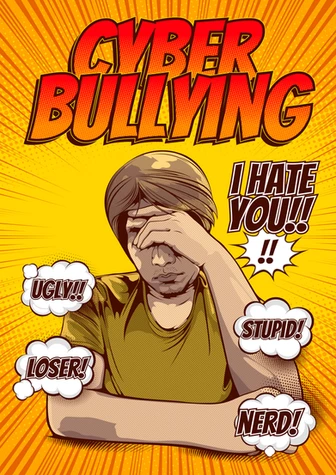

Social media’s ‘dark side’ has become increasingly visible to us all during these difficult times, from Covid conspiracy theorists to the torrent of online hate aimed mainly at black football players at Euro 2020. It certainly seems as if social media itself is providing a space for anger and hatred.

According to a 2019 report by The Alan Turing Institute, “…between 30-40% of people in the UK have seen online abuse (and) that 10-20% of people in the UK have personally been targeted by abusive content online.” Online abuse can take many forms, from fake news to death threats – along with racism, homophobia, sexism, the politicisation of health, child abuse content… the list can seem endless. Online safety and brand protection professionals, such as StrawberrySocial, are becoming increasingly vital in the battle to keep social media a safe space for individuals and organisations alike.

This topic formed the final panel discussion at the recent CharityComms conference – “Beyond the algorithm: social media for charities”. It stirred up so much chat that we were inspired to put together our five top tips for dealing with the dark side.

1. Risk assessment

- List any subject issues that do or might affect your organisation. How could they manifest online (which phrases or visual assets might be used), and which people or organisations might target you?

- Assess the potential risk of trouble coming from within your company or charity, including branch closures, redundancies and scandals amongst your employees.

- Audit and sense check your social media presence regularly, across each channel and including your advertising – be very clear on what your aim is.

- Set up a supportive and collaborative working environment for your social media manager and/or team. It can be a stressful and unrewarding job at times so ensure they have avenues of support available to them including access to counselling.

2. A digital comms hub

- Construct a central ‘crisis hub’ that details your online presence, channel by channel, aim by aim. This can be on an internal company system or on a cloud-based platform.

- It should contain a clear social media policy that covers external comms and gives all personnel guidance on their own social media accounts and what they put out in the public domain.

- Include all the information needed for the day to day work on each channel (processes, workflows and lists are great).

- Include all details from your Risk Assessment exercise, including what to do (how to react) in each situation – i.e. depending on the nature and the level of the crisis.

- Provide signed-off responses. House them in the hub along with instructions for use.

- Include all contact information for everyone involved – both in the company and in any agencies working with you. Make this part of an escalation workflow, organised by issue and time of day, and state clearly who has sign-off and ultimate responsibility.

- Articulate your company / charity’s moderation best practices. Who are you? What guidelines ensure your audience know that they are in safe hands?

- Provide clear guidelines from the top for good comms between the team members.

- Share escalation workflows with your team – walk them through the workflows regularly and keep the workflows up to date.

- Use proven social media tools to monitor your social channels (e.g. Conversocial, SproutSocial, Sprinklr). Create a ‘paper trail’ so that you can respond with evidence to any complaints or emergency disclosures.

- Have a ‘gatekeeper’ (or gatekeepers) for the hub – all changes to the contents, plus all ongoing reviews, need to be approved by them. They should also be the ones who make the edits.

3. Train your team

- Talk to them about empathy and understanding – provide real examples of how well things can be handled, alongside showing them what not to do.

- Clarify when it is better not to engage, when to hide content, when to block and when to escalate.

- Have effective internal comms for team members to ask for second opinions.

- Ensure they understand the social media policy and hub contents.

- Choose internal systems (Teams, WhatsApp, Google Chat) where everyone involved can chat and monitor issues. Detail how these comms channels should be used and by whom.

4. Protect your team’s mental health

- Have a clear support process that lists the help available to the team. This can range from recommendations on how to monitor and protect their own mental health (walking, regular breaks, talking with peers) to support available via counselling and other departmental resources.

- Mental health charity Mind has a wide range of useful resources to choose from. One that kept cropping up when we asked was its ‘taking care of yourself’ advice.

- Our super clients at Samaritans have a useful wellbeing in the workplace online learning resource that’s worth checking out.

- Have someone on the team or in the HR department who can be approached anonymously when people need advice or support.

- Have a central team chat where people can talk about something that has upset them – working from home can be lonely so make sure there is always someone to reach out to.

- Make sure your team takes breaks from work, and prompt them to clearly separate their working area from the rest of their house and everyday life.

5. Campaign for change

- The argument that social media platforms allow too much anonymity has really taken hold. It’s time to join the campaign for greater transparency, responsibility and fit-for-purpose consequences.

- Let’s work towards ensuring that social networks take down rule-breaking content quickly, and accurately too, so as to avoid accusations of bias or incompetence. Do check that your team has the contact information for each channel in use, so that they can raise reports effectively.

- Safeguards for children’s rights to privacy and safety need to be improved at government level.

- Press for improved accuracy in filtering of content.

Finally: setting rules in such a rapidly changing world may not always be the right thing to do… it may not even be possible. But discussing them constantly is vital. It’s only through talking about what isn’t working that we can strategise for our next stage. Frequently there is no straightforward right or wrong (despite what the ‘shouters’ say), but there is the constant need to assess, consider and improve.

We all have the power to change things for the better but it needs to be done together – and this includes holding the large social channels to account. Let’s encourage them to do better, for us and for future generations.

August 2, 2021
